Why Most AI Product Development Pipelines Fail (And What Actually Works)

The artificial intelligence industry has developed an orthodox playbook for building intelligent products: assemble data science teams, invest in MLOps platforms, establish rigorous experimentation frameworks, and construct elaborate pipelines modeled after practices at technology giants. Yet despite following this conventional wisdom, most organizations struggle to move AI initiatives from proof-of-concept to production impact. The failure rate isn't just high; it's embarrassingly consistent across industries, company sizes, and use cases. Observation of dozens of implementations across enterprise and startup contexts reveals an uncomfortable pattern: the standard approach to AI product development fundamentally misunderstands how intelligent capabilities actually create value.

The problem isn't that teams lack technical sophistication or that AI product development pipelines require capabilities beyond most organizations' reach. Rather, the conventional playbook optimizes for the wrong objectives, solves problems that don't matter, and ignores the actual bottlenecks that prevent AI from delivering business results. This isn't a minor calibration issue requiring tweaks around the edges. It represents a fundamental misalignment between how the industry teaches AI development and how successful intelligent products actually emerge. The contrarian reality is that the most impactful AI implementations often violate the standard best practices, while meticulously engineered pipelines following every recommended pattern frequently deliver minimal value despite substantial investment.

The Conventional Wisdom Is Wrong

The dominant narrative about AI product development pipelines emphasizes infrastructure, tooling, and process maturity. Industry thought leaders advocate for sophisticated MLOps platforms, automated retraining workflows, extensive A/B testing frameworks, and data governance systems before attempting production deployments. The implicit message is that AI development requires industrial-grade engineering practices from day one, and that shortcuts inevitably lead to technical debt, model degradation, and failed initiatives.

This perspective fundamentally misunderstands the nature of uncertainty in AI product development. Unlike traditional software where requirements can be specified upfront and implementations verified against specifications, intelligent capabilities operate in domains where neither the optimal solution nor the actual value is knowable before deployment. You cannot determine through analysis whether a recommendation system will increase engagement or whether a predictive model will improve operational efficiency. These questions have empirical answers that only emerge through real-world usage.

The conventional approach treats this uncertainty as a risk to be mitigated through planning and process. The contrarian insight is that uncertainty represents an opportunity to be exploited through rapid experimentation. Organizations that delay deployment until they've built comprehensive pipelines sacrifice months or years of learning in exchange for infrastructure that may prove unnecessary. Meanwhile, competitors who deploy imperfect solutions quickly begin accumulating the usage data, user feedback, and operational insights that actually matter for building valuable AI capabilities.

The False Promise of Best Practices

Best practices emerge from observing successful implementations and attempting to extract generalizable patterns. The AI industry has largely borrowed its best practices from technology giants operating at scales and complexities that don't apply to most organizations. Google, Meta, and Amazon serve billions of users with hundreds of models requiring specialized infrastructure that justifies sophisticated tooling. Applying their practices to products serving thousands of users with a single model wastes resources on problems you don't have while ignoring challenges that actually impede progress.

Why Traditional Pipelines Create More Problems Than They Solve

Standard AI product development pipelines introduce several categories of overhead that slow iteration without corresponding benefits for most use cases. First, they demand specialized expertise in infrastructure tools, workflow orchestration, and distributed systems that have minimal connection to actually building valuable AI features. Teams spend months learning Kubernetes, configuring ML platforms, and debugging deployment pipelines before they've validated that the AI capability they're building actually matters to users.

Second, elaborate pipelines create coordination costs that reduce iteration velocity. Each experiment requires configuring workflow definitions, updating schemas, versioning artifacts, and coordinating across multiple systems. What should be a quick test of a different model architecture becomes a multi-day exercise in pipeline engineering. The cognitive overhead of managing complex tooling actively prevents the rapid experimentation that drives AI innovation.

Third, comprehensive pipelines optimize for operating many models at scale rather than discovering whether a single model creates value. They solve for challenges that only emerge after you've achieved product-market fit with your AI capability: managing dozens of model variants, serving millions of requests per second, coordinating retraining across data centers. For organizations still trying to build their first valuable AI feature, these capabilities represent expensive distractions from the actual work of understanding user needs, evaluating model performance, and iterating toward impact.

The Hidden Opportunity Cost

The most significant cost of traditional AI product development pipelines isn't the time and money invested in building infrastructure. It's the opportunities never pursued because teams cannot iterate fast enough to explore the solution space. When each experiment requires substantial engineering effort, teams naturally become conservative, testing only variations of approaches they're confident will work. This risk aversion prevents the exploratory work that often yields breakthrough insights about what's actually valuable. Organizations with lighter-weight processes ship more experiments, learn faster, and discover non-obvious applications that would never survive a rigorous prioritization process focused on sure wins.

The Hidden Cost of Over-Engineering

Over-engineering in AI development manifests as investment in capabilities that address hypothetical future needs rather than current bottlenecks. Teams build automated retraining pipelines before they've manually retrained a model even once and discovered whether retraining actually improves performance. They implement sophisticated monitoring dashboards before they understand what metrics matter. They construct elaborate feature stores before they know which features drive model quality.

This premature optimization stems from anxiety about technical debt and the desire to "do things right" from the beginning. The paradox is that without deployment experience and usage data, you cannot know what "right" actually means for your specific context. The monitoring metrics that matter for a fraud detection system differ entirely from those relevant to content recommendations. The retraining frequency optimal for demand forecasting may be completely wrong for customer churn prediction. These insights emerge from operating systems in production, not from architectural planning.

Modern product development teaches us to build minimum viable products that test hypotheses quickly rather than comprehensive solutions that anticipate every possible requirement. AI development should follow the same principles. Deploy the simplest possible implementation that could demonstrate value, instrument it to capture actual usage patterns, then invest in infrastructure only where you've identified proven bottlenecks. This approach ensures engineering effort flows toward problems you actually have rather than ones you imagine you might encounter.

When Sophistication Matters

This isn't an argument against engineering rigor or sophisticated tooling. Organizations operating at scale with mature AI products absolutely need robust pipelines, comprehensive monitoring, and automated workflows. The contrarian claim is about sequencing: build these capabilities in response to demonstrated needs, not speculatively. If you're retraining models weekly and the manual process consumes substantial data science time, invest in automation. If you're serving millions of predictions daily and latency impacts user experience, optimize your serving infrastructure. But don't build these systems before you've validated that the AI capability creates enough value to justify the investment.

A Better Framework: Adaptive AI Development

If traditional AI product development pipelines optimize for the wrong objectives, what approach actually works? The alternative framework prioritizes learning velocity over process maturity, focusing on the fastest path to validated insights about what creates user value. This adaptive approach treats AI development as a discovery process rather than an engineering project, with distinct phases that evolve as understanding deepens.

The first phase focuses purely on validation: does any AI solution to this problem create meaningful value? Deploy the simplest possible model through the most expedient integration path, even if it requires manual processes, scheduled batch jobs, or other approaches that wouldn't scale. Instrument the deployment to capture user behavior, business metrics, and model performance. Run this experiment long enough to generate statistically meaningful results about whether the capability matters. Many initiatives should terminate here when data reveals that the AI feature doesn't move metrics that matter.
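One way to judge whether a phase-one experiment has produced "statistically meaningful results" is a two-proportion z-test comparing a business metric (for example, a conversion rate) between users who saw the AI feature and users who did not. The sketch below is illustrative only: the function name, the choice of test, and the example counts are assumptions, not something the framework prescribes, and a real experiment may call for a different metric or test.

```python
import math


def two_proportion_z_test(control_successes, control_total,
                          treated_successes, treated_total):
    """Two-sided z-test for a difference between two success rates.

    Returns (z, p_value). Hypothetical helper for illustration.
    """
    p_control = control_successes / control_total
    p_treated = treated_successes / treated_total

    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (control_successes + treated_successes) / (control_total + treated_total)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / control_total + 1 / treated_total))
    if std_err == 0:
        raise ValueError("rates are all 0 or all 1; the test is undefined")

    z = (p_treated - p_control) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: 100 of 1000 control users converted, 130 of 1000 treated users.
z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be noise; a large one is a signal either to keep collecting data or to consider terminating the initiative.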

The second phase optimizes the validated capability, improving model quality, reducing latency, and enhancing user experience based on actual usage patterns observed in phase one. This is where sophisticated modeling techniques, architectural improvements, and initial automation become appropriate. You're no longer speculating about what might work; you're optimizing a system with proven value. Investments have clear ROI because you understand what improvements yield what outcomes.

The third phase scales the successful capability, building the robust infrastructure, automated workflows, and comprehensive monitoring that support high-volume, business-critical systems. Strategic AI integration at this stage means treating the AI capability as core product infrastructure that demands engineering excellence. But you reach this phase only after proving value and optimizing performance, ensuring that infrastructure investments support capabilities that genuinely matter rather than speculative experiments.

Flexibility Over Standardization

Adaptive development embraces different approaches for different problems rather than mandating standardized processes. Some use cases benefit from continuous learning and frequent retraining; others perform best with static models updated quarterly. Some require real-time inference; others work fine with overnight batch processing. Allow teams to choose approaches matching their specific constraints rather than forcing every initiative through identical pipelines designed for different problems.

Real-World Evidence Supporting the Alternative Approach

Organizations that achieve meaningful AI impact often violate conventional wisdom about AI product development pipelines. They deploy models through simple API calls to third-party services rather than building custom infrastructure. They retrain manually based on observed performance degradation rather than automated schedules. They integrate AI features through quick prototypes that bypass standard development processes, then retrofit engineering rigor only after proving value.
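Retraining "based on observed performance degradation" does not require an automated pipeline; a periodic check against a baseline can be enough to tell a person when to act. The following is a minimal sketch under stated assumptions: the function name, the accuracy metric, the tolerance, and the minimum sample size are all hypothetical placeholders to be replaced with whatever matters in your context.

```python
def needs_retraining(baseline_accuracy, recent_outcomes,
                     tolerance=0.05, min_samples=200):
    """Flag a model for manual retraining when recent accuracy drops.

    recent_outcomes is a list of booleans, True where the prediction
    turned out to be correct. All thresholds are illustrative defaults.
    """
    # Too little evidence: do not raise an alarm on a handful of cases.
    if len(recent_outcomes) < min_samples:
        return False

    recent_accuracy = sum(1 for ok in recent_outcomes if ok) / len(recent_outcomes)
    return recent_accuracy < baseline_accuracy - tolerance


# Made-up example: baseline 90% accuracy, recent window 160 correct out of 200.
recent = [True] * 160 + [False] * 40
print(needs_retraining(0.90, recent))
```

Run by hand or from a scheduled job, a check like this keeps a human in the loop while deferring the cost of an automated retraining workflow until it is clearly needed.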

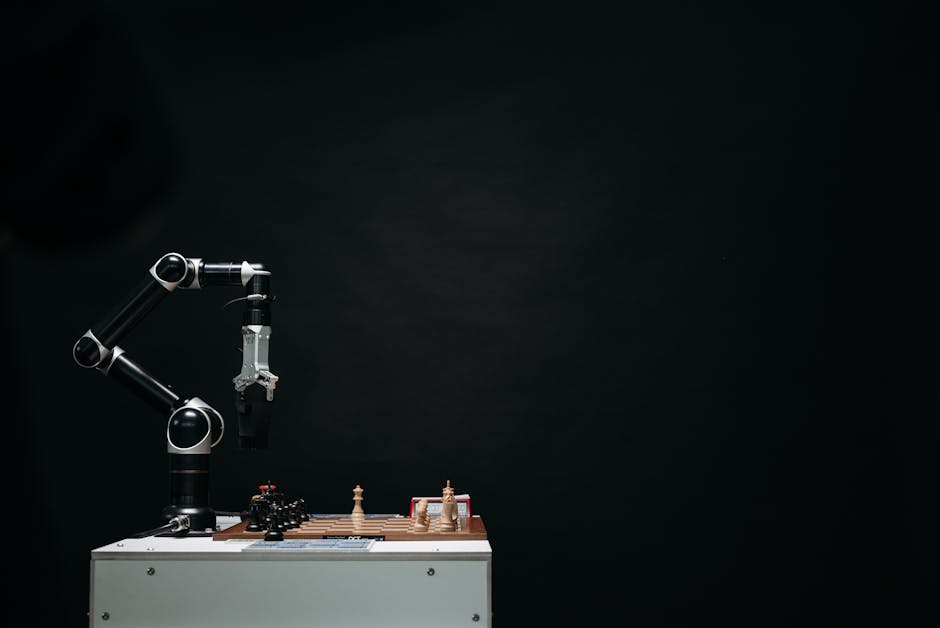

A financial services company spent eighteen months building a comprehensive ML platform with automated pipelines, sophisticated monitoring, and enterprise-grade governance before deploying their first model to production. Meanwhile, a competitor integrated a simple fraud detection model through a vendor API in six weeks, began capturing fraud patterns and false positive rates, and iterated toward a custom solution informed by real usage data. Twelve months later, the competitor had deployed five increasingly sophisticated fraud detection capabilities serving millions of transactions daily, while the first company was still onboarding their initial use case to their beautiful infrastructure.

A healthcare technology startup deployed AI-powered diagnosis assistance through a deliberately manual workflow: clinicians submitted cases through a form, data scientists ran inference overnight, and results appeared the next morning. This approach couldn't scale, but it validated that clinicians found the suggestions valuable enough to incorporate into their workflows. That insight justified building automated real-time inference infrastructure. Had they invested in automation upfront, they would have risked building a fast system for delivering suggestions that clinicians might not have wanted.
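A workflow like this can be very small in code. The sketch below shows one plausible shape for an overnight batch job: read the submitted cases from a CSV export, score each one, and write a results file for the morning. It is not the startup's actual implementation; the column names (`case_id`, `suggestion`), the function name, and the `predict` callable are assumptions made for illustration.

```python
import csv
from typing import Callable, Dict, TextIO


def run_overnight_batch(submitted: TextIO, results: TextIO,
                        predict: Callable[[Dict[str, str]], str]) -> int:
    """Score every submitted case and write one result row per case.

    `submitted` is a CSV with a header row that includes `case_id`.
    `predict` stands in for whatever model is being validated.
    Returns the number of cases processed.
    """
    reader = csv.DictReader(submitted)
    writer = csv.DictWriter(results, fieldnames=["case_id", "suggestion"])
    writer.writeheader()

    count = 0
    for case in reader:
        writer.writerow({"case_id": case["case_id"], "suggestion": predict(case)})
        count += 1
    return count
```

In practice the two file handles would come from `open(...)` on the form export and an output path, and the script would be started by hand or by a scheduler. The point is that validation needs a loop and a model, not a serving platform.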

The pattern across successful implementations is clear: they optimize for learning velocity early, then systematically invest in sophistication as needs emerge. They build pipelines to solve problems they have, not ones they anticipate. They treat AI development as hypothesis testing requiring rapid iteration rather than engineering projects requiring comprehensive planning.

The Role of Organizational Context

The adaptive approach works particularly well for organizations without existing AI capabilities or those exploring new use cases. It enables progress with small teams and limited resources by focusing effort on validation rather than infrastructure. For organizations with mature AI practices and established platforms, the calculus changes. Leveraging existing infrastructure makes sense when it actually accelerates deployment rather than adding overhead. The key insight is matching approach to context rather than following universal prescriptions regardless of circumstances.

Conclusion

The artificial intelligence industry needs to acknowledge an uncomfortable truth: the conventional wisdom about how to build AI product development pipelines optimizes for problems most organizations don't have while ignoring the actual challenges that prevent valuable AI capabilities from reaching users. Elaborate infrastructure, sophisticated tooling, and comprehensive processes make sense for mature products operating at scale. They represent expensive distractions for teams still searching for product-market fit with their AI features.

The contrarian approach advocated here prioritizes learning velocity, empirical validation, and adaptive investment over process maturity and speculative infrastructure. It treats AI development as discovery requiring rapid iteration rather than engineering requiring comprehensive planning. Organizations embracing this adaptive mindset deploy faster, learn more quickly, and achieve impact with fewer resources than those following standard playbooks.

The path to successful AI products runs through validated user value, not through perfect pipelines. Build the minimal infrastructure necessary to test hypotheses quickly, invest in sophistication where you've proven it matters, and resist the siren call of best practices divorced from your specific context. Those willing to challenge conventional wisdom and embrace AI integration strategies grounded in empirical learning rather than industry dogma will discover that the fastest path to AI impact often violates everything the experts recommend, and that's precisely why it works.
